Agentic workflows are systems where AI can reason through a goal, break it into steps, use real tools, check its own work, and adapt based on what it finds.

If you work with AI seriously, it's worth cutting through the noise to understand what agentic workflows actually involve.

So let's do that. No grand promises. Just a clear-eyed look at what agentic workflows are, where they genuinely help, what can go wrong, and how to think about building them responsibly.

In this article:

- What Are Agentic Workflows

- The Core Loop of Agentic Workflows

- What Makes a Workflow Actually "Agentic"?

- A Field Guide to the Most Common Patterns

- Where Agentic Workflows Actually Make Sense

- The Good and Bad About Agentic Workflows

- How to Build This Without Creating Chaos

- A Word on Memory and Context

- Try an Ultimate AI Agent Workspace with Prebuilt Workflows

What Are Agentic Workflows (Without the Jargon)

At its simplest, an agentic workflow is a system where AI decides what to do next during execution—based on context and intermediate results—rather than following a completely predetermined script.

That's the key distinction. A traditional automated workflow is like a train on fixed tracks: predictable, efficient, and completely brittle the moment something falls outside its expected path. An agentic workflow is more like giving a capable employee a goal and a set of tools and letting them figure out the best sequence of steps to get there.

But "agentic" doesn't automatically mean fully autonomous, and this is where it helps to have some vocabulary. There's actually a spectrum:

AI workflow

AI is embedded inside a mostly fixed process to handle specific outputs—classifying a document, generating a summary, drafting a response. The flow itself is predetermined.

Router workflow

AI decides which path, tool, or task to pursue next. The structure still exists, but the AI is making routing decisions at runtime.

Autonomous agent

An AI agent can make broader process decisions, create sub-tasks, and dynamically assemble approaches it wasn't explicitly programmed to use.
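To make the first two categories concrete, here is a minimal Python sketch. `classify_ticket` is a hypothetical keyword-based stand-in for a model call, and the handler names are illustrative; the point is only where the AI's output sits in the control flow.

```python
from typing import Callable, Dict

def classify_ticket(text: str) -> str:
    # Hypothetical stand-in for a model call; keyword rules keep the sketch runnable.
    lowered = text.lower()
    if "refund" in lowered or "charge" in lowered:
        return "billing"
    if "error" in lowered or "crash" in lowered:
        return "technical"
    return "general"

def ai_workflow(text: str) -> dict:
    # AI workflow: the model fills one slot; the next step is always the same.
    return {"label": classify_ticket(text), "next_step": "human_triage_queue"}

# Router workflow: the model's output selects which handler runs next.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "billing": lambda text: "sent to billing tool",
    "technical": lambda text: "opened engineering ticket",
    "general": lambda text: "drafted FAQ reply",
}

def router_workflow(text: str) -> str:
    route = classify_ticket(text)  # routing decision made at runtime
    return HANDLERS[route](text)

print(ai_workflow("The app crashes on login"))
# {'label': 'technical', 'next_step': 'human_triage_queue'}
print(router_workflow("The app crashes on login"))
# opened engineering ticket
```

In `ai_workflow` the model's label is recorded but the next step never changes; in `router_workflow` the same label decides which handler runs.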

Most production systems today live somewhere in the first two categories. True autonomous agents—systems that reason and act with minimal human-defined structure—are the subject of active research and carry significant governance challenges.

The distinction matters because treating every automation as an "agent" leads to misplaced expectations in both directions: either dismissing real capabilities or overstating what's actually in production.

The Core Loop of Agentic Workflows

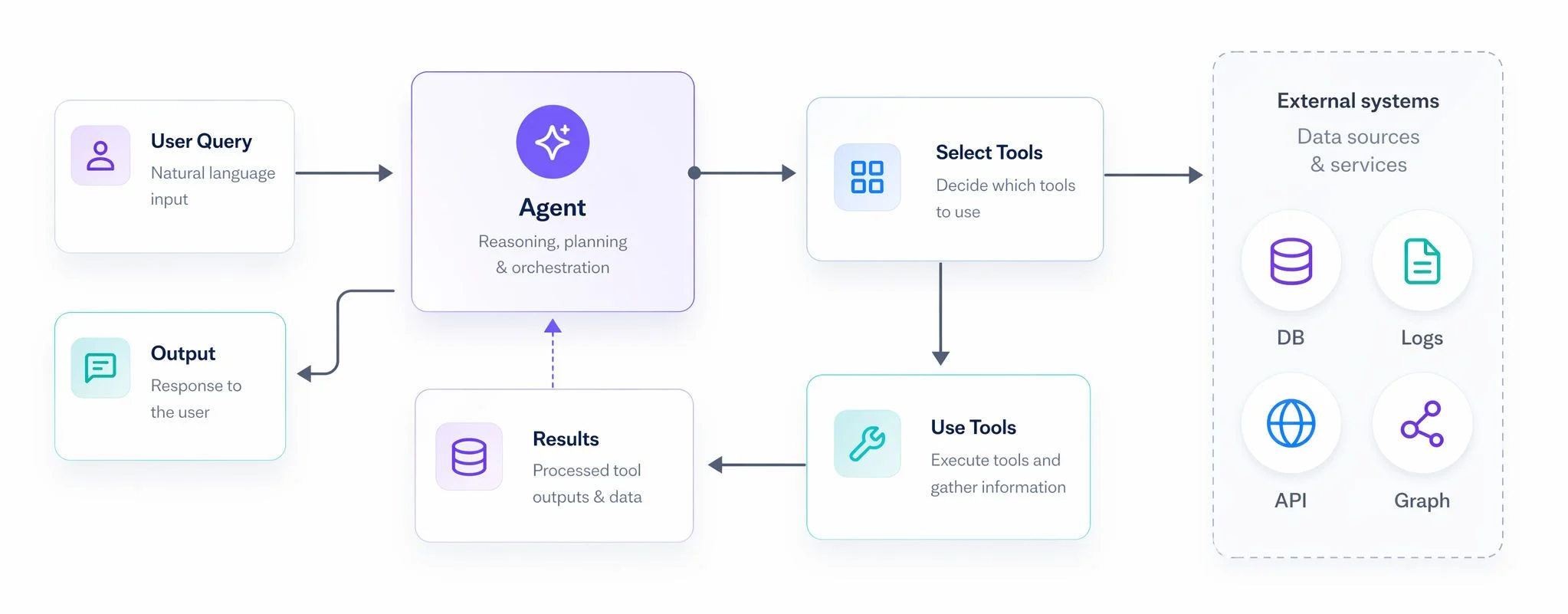

If you remember only one mental model from this article, make it this one: planning → tool use → reflection → orchestration. This four-part loop is what separates an agentic system from a smarter chatbot.

Planning is where the system takes a broad goal and breaks it into a sequence of manageable steps. Instead of trying to answer everything in one shot, it decides: what do I need to find out first? What comes next?

Tool use is where things get genuinely powerful. Rather than generating an answer from internal model knowledge (and risking hallucination), the system calls external tools: a search engine, a database, an API, a code interpreter, an internal system. It gets real information and acts on it.

Reflection is the self-check. After taking an action or producing an output, the system evaluates whether the result actually meets the goal. If not, it revises and tries again. This is typically implemented as a generator-critic dynamic: one part of the system produces output, another critiques it, and the loop continues until the result passes defined quality criteria—or a retry limit kicks in.

Orchestration is the coordination layer: managing memory across steps, passing the right context between components, handling handoffs, and ensuring the overall goal stays on track.

The real power isn't in any single component. It's in the loop. An agentic workflow feels less like a clever prompt and more like a system with genuine problem-solving structure—because that's exactly what it is.
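The loop can be sketched in a few dozen lines of Python. This is a toy under stated assumptions: the only tool is a hypothetical inventory lookup (`check_stock`), and `plan` and `reflect` are rule-based stand-ins for what a model would do in a real system.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical tool: a real system would query a database or API here.
INVENTORY = {"widget": 12, "gadget": 3}

def check_stock(item: str) -> Optional[int]:
    return INVENTORY.get(item)  # None when the item is unknown

TOOLS = {"check_stock": check_stock}

def plan(goal: str) -> List[dict]:
    # Planning: a real system would ask a model to decompose the goal.
    items = goal.split(":", 1)[1].split(",")
    return [{"tool": "check_stock", "arg": item.strip()} for item in items]

def reflect(result: Optional[int]) -> bool:
    # Reflection: the critic accepts only a usable, non-negative count.
    return isinstance(result, int) and result >= 0

@dataclass
class State:
    # Orchestration: working memory carried between steps.
    goal: str
    results: Dict[str, int] = field(default_factory=dict)
    escalations: List[str] = field(default_factory=list)

def run(goal: str, max_retries: int = 2) -> State:
    state = State(goal)
    for step in plan(goal):
        for _ in range(max_retries + 1):
            # Tool use. A real agent would revise the call between retries.
            out = TOOLS[step["tool"]](step["arg"])
            if reflect(out):
                state.results[step["arg"]] = out
                break
        else:
            state.escalations.append(step["arg"])  # retry limit reached
    return state

state = run("stock check: widget, gadget, gizmo")
print(state.results)      # {'widget': 12, 'gadget': 3}
print(state.escalations)  # ['gizmo']
```

Because the toy tool is deterministic, retrying an unknown item cannot succeed, so that step hits its retry limit and is escalated instead of looping forever.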

What Makes a Workflow Actually "Agentic"?

Here's a quick concrete test. Suppose you need to investigate why a key metric has dropped. Here's how three different approaches handle it:

- Deterministic workflow: runs a fixed sequence of checks and generates a standard report. Works great if the cause is always the same; falls apart when it isn't.

- Non-agentic AI workflow: adds an LLM to summarize the report or classify likely causes. Better, but the AI still sits inside a fixed pipeline; it isn't deciding what to look at.

- Agentic workflow: the system pulls initial data, hypothesizes likely causes, queries the relevant systems based on those hypotheses, evaluates the evidence, refines its thinking, and escalates or summarizes based on what it actually finds.

The difference is runtime adaptability—the core idea behind agentic AI. The agentic workflow doesn't know ahead of time which systems it will need to query or what the root cause will be—it figures that out as it goes. That's its strength. And it's also why it's overkill for tasks where the path is already fully known.
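Here is a sketch of the agentic version of the metric investigation, with hypothetical data sources and hand-written hypothesis rules standing in for model reasoning. All source names, values, and thresholds are illustrative assumptions.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical data sources with illustrative values; real ones would be
# monitoring, logging, and analytics systems.
SOURCES: Dict[str, Callable[[], dict]] = {
    "deploy_log": lambda: {"recent_deploy": True},
    "error_logs": lambda: {"errors_after_deploy": 4},
    "traffic": lambda: {"EU": -0.02, "US": -0.31},  # change by region
    "payments": lambda: {"error_rate": 0.001},
}

# For each hypothesis: which source tests it, and what evidence supports it.
TESTS: Dict[str, Tuple[str, Callable[[dict], bool]]] = {
    "bad_deploy": ("error_logs", lambda d: d["errors_after_deploy"] > 100),
    "regional_traffic_drop": ("traffic", lambda d: min(d.values()) < -0.2),
    "payment_failures": ("payments", lambda d: d["error_rate"] > 0.05),
}

def hypothesize(initial: dict) -> List[str]:
    # A real system would ask a model to rank hypotheses; this stub is rule-based.
    ranked = ["regional_traffic_drop", "payment_failures"]
    if initial["recent_deploy"]:
        ranked.insert(0, "bad_deploy")
    return ranked

def investigate() -> dict:
    initial = SOURCES["deploy_log"]()        # pull initial data
    queried: List[str] = []
    for hypothesis in hypothesize(initial):  # hypotheses decide what to query
        source, supported = TESTS[hypothesis]
        queried.append(source)
        if supported(SOURCES[source]()):     # evaluate the evidence
            return {"cause": hypothesis, "queried": queried}
    return {"cause": None, "queried": queried, "action": "escalate"}

print(investigate())
# {'cause': 'regional_traffic_drop', 'queried': ['error_logs', 'traffic']}
```

Note that `payments` is never queried: once the traffic evidence supports a hypothesis, the investigation stops. A deterministic workflow would have run every check regardless.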

If a process is stable and predictable, classic automation is almost always the right call. Agentic reasoning is best reserved for the messy, open-ended parts of a problem.

A Field Guide to the Most Common Patterns

There are four frequently used design patterns for agentic systems. Think of these as reusable building blocks.

Planning pattern

The system decomposes a goal into a sequence of actions at runtime. Good implementations make plans testable—each step has a clear success or failure condition, so the system knows when to move on and when to retry or escalate. This pattern shines when a task has multiple dependencies or when the best approach depends on what earlier steps reveal.
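A minimal sketch of testable plan steps, assuming each step carries its own success condition and attempt budget. The `Step` and `execute_plan` names are hypothetical, and the plan is hard-coded here where a real system would have a model produce it at runtime.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class Step:
    name: str
    action: Callable[[dict], dict]     # returns an updated context
    succeeded: Callable[[dict], bool]  # explicit, testable success condition
    max_attempts: int = 2

def execute_plan(steps: List[Step], ctx: dict) -> Tuple[str, dict]:
    for step in steps:
        for _ in range(step.max_attempts):
            ctx = step.action(ctx)
            if step.succeeded(ctx):
                break                            # condition met: move on
        else:
            return f"escalate:{step.name}", ctx  # attempts exhausted
    return "done", ctx

calls = {"fetch": 0}

def fetch(ctx: dict) -> dict:
    # Simulated flaky source: returns rows only on the second call.
    calls["fetch"] += 1
    return {**ctx, "rows": [3, 5, 7] if calls["fetch"] >= 2 else []}

def summarize(ctx: dict) -> dict:
    return {**ctx, "mean": sum(ctx["rows"]) / len(ctx["rows"])}

# In a real system a model would produce these steps at runtime.
steps = [
    Step("fetch", fetch, lambda c: len(c.get("rows", [])) > 0),
    Step("summarize", summarize, lambda c: "mean" in c),
]
status, ctx = execute_plan(steps, {})
print(status, ctx["mean"], calls["fetch"])  # done 5.0 2
```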

Tool-use pattern

Rather than guessing, the system calls structured tools to get real information or take real actions. The engineering discipline here matters: good tools have strict schemas, validated inputs, and structured outputs. Vague tools with loose interfaces are how you end up with an agent confidently taking the wrong action.
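One way to express a strict tool contract in plain Python, assuming a hypothetical refund tool (`RefundRequest`, `refund_tool`, the `ORD-` ID format, and the 50,000-cent cap are all illustrative). Schema libraries can do the same job; this version sticks to the standard library.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class RefundRequest:
    # Strict input schema for a hypothetical refund tool.
    order_id: str
    amount_cents: int

    def __post_init__(self) -> None:
        if not isinstance(self.order_id, str) or not re.fullmatch(r"ORD-\d+", self.order_id):
            raise ValueError(f"invalid order_id: {self.order_id!r}")
        if type(self.amount_cents) is not int or not 0 < self.amount_cents <= 50_000:
            raise ValueError(f"amount_cents out of range: {self.amount_cents!r}")

@dataclass(frozen=True)
class ToolResult:
    # Structured output the agent can check, instead of free-form text.
    ok: bool
    detail: str

def refund_tool(raw_args: dict) -> ToolResult:
    try:
        request = RefundRequest(**raw_args)  # unknown or missing fields raise TypeError
    except (TypeError, ValueError) as exc:
        return ToolResult(ok=False, detail=f"rejected: {exc}")
    return ToolResult(ok=True, detail=f"refund queued for {request.order_id}")

print(refund_tool({"order_id": "ORD-1042", "amount_cents": 1500}))
print(refund_tool({"order_id": "1042", "amount_cents": 1500}))
```

A malformed call comes back as a structured rejection the agent can read and correct, rather than turning into a silent wrong action.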

Reflection pattern

After producing output, the system evaluates it before committing. A generator produces a draft; a separate critic or judge evaluates it against defined criteria; the system revises if needed. The critical implementation detail: always cap retries. Without a loop breaker, a reflection loop can run indefinitely, eating time and compute while producing diminishing returns.
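A generator-critic loop with a hard round cap might look like this. `generate` and `critique` are hypothetical stubs for what would normally be two model calls with different instructions.

```python
from typing import Callable, Optional, Tuple

def reflect_loop(
    generate: Callable[[Optional[str]], str],
    critique: Callable[[str], Optional[str]],
    max_rounds: int = 3,
) -> Tuple[str, bool, int]:
    """Generator-critic loop with a hard cap on rounds (the loop breaker)."""
    feedback: Optional[str] = None
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = generate(feedback)
        feedback = critique(draft)  # None means the draft passed
        if feedback is None:
            return draft, True, round_no
    return draft, False, max_rounds  # cap reached: hand off instead of looping

def generate(feedback: Optional[str]) -> str:
    # Hypothetical generator; a real one would prompt a model with the feedback.
    draft = "Latency rose after the cache change."
    if feedback:
        draft += " Action: roll back the cache change."
    return draft

def critique(draft: str) -> Optional[str]:
    # Hypothetical critic with one explicit criterion.
    return None if "Action:" in draft else "Add a concrete action item."

print(reflect_loop(generate, critique))
```

Returning a `passed` flag alongside the draft lets the caller decide what happens when the cap is reached, for example escalating to a person.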

Multi-agent orchestration pattern

Specialized roles—planner, retriever, executor, validator, reporter—work together under a coordinating orchestrator.

This separation of duties can significantly improve output quality—each component does one thing well. But more moving parts mean more coordination complexity, more places for state to drift, and more things to instrument and debug. Multi-agent setups are powerful; they're also not free.
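A stripped-down sketch of role separation under an orchestrator. Each role is a hypothetical stub, and the handoff order is fixed for simplicity; a real orchestrator might choose the next role dynamically or loop back when validation fails.

```python
from typing import Callable, List, Tuple

# Each "agent" is a hypothetical stub with one narrow job. In practice each
# role might wrap its own prompt, model, and tool set.
def planner(state: dict) -> dict:
    return {**state, "queries": [f"background on {state['goal']}"]}

def retriever(state: dict) -> dict:
    return {**state, "docs": [f"doc for '{q}'" for q in state["queries"]]}

def executor(state: dict) -> dict:
    return {**state, "draft": f"{len(state['docs'])} source(s) on {state['goal']}"}

def validator(state: dict) -> dict:
    return {**state, "valid": bool(state["docs"]) and bool(state["draft"])}

def reporter(state: dict) -> dict:
    report = state["draft"] if state["valid"] else "needs human review"
    return {**state, "report": report}

PIPELINE: List[Tuple[str, Callable[[dict], dict]]] = [
    ("planner", planner),
    ("retriever", retriever),
    ("executor", executor),
    ("validator", validator),
    ("reporter", reporter),
]

def orchestrate(goal: str) -> dict:
    # Orchestrator: passes shared state between roles and records each handoff.
    state: dict = {"goal": goal, "trace": []}
    for role, agent in PIPELINE:
        state = agent(state)
        state["trace"] = state["trace"] + [role]
    return state

result = orchestrate("customer churn")
print(result["report"])  # 1 source(s) on customer churn
print(result["trace"])
```

The `trace` list is a small example of the instrumentation multi-agent setups need: without it, finding which role produced a bad state is guesswork.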

Where Agentic Workflows Actually Make Sense

Let's get concrete. Here are the use cases where this approach genuinely earns its complexity:

Deep research and information synthesis. When you need to gather information from multiple sources, spot gaps, compare conflicting claims, and produce a structured summary—and when the next best question depends entirely on what was just found—a fixed pipeline breaks. An agentic system can keep probing until the picture is coherent.

Incident investigation and support triage. Pulling logs, querying monitoring systems, testing hypotheses about root cause, narrowing to a likely explanation, and escalating when confidence is too low to act: this is exactly the kind of dynamic, evidence-driven reasoning that agentic systems handle well. Survey findings suggest that organizations expect meaningful reductions in resolution time for service workflows where these systems are deployed well.

Document-heavy work. Contract review, policy analysis, extracting structured decisions from long documents: tool use paired with validation can produce far more reliable results than a single one-shot prompt against a long context.

Operational workflows with exceptions. Most of a process may be fully automatable with standard rules. But edge cases—the ones that require real judgment—are where an agentic layer can pick up the slack. The agent doesn't need to own the whole workflow, just the messy parts.

The Good and Bad About Agentic Workflows

While agentic workflows offer significant advantages, they also introduce unique challenges.

What agentic workflows are good for:

- Adaptability for complex tasks

- Better outputs with valid, real data

- Improved operational efficiency

- Scalability with human checkpoints

Where things can go wrong:

- Hallucinations don't disappear

- Security risks grow with capability

- Latency and cost pile up with complexity

- Multi-agent systems are genuinely difficult to debug

How to Build This Without Creating Chaos

The gap between an impressive demo and a production-grade agentic system is wide. Here's what actually bridges it:

Bound the goal tightly. Narrow, well-specified objectives are far easier for an agent to plan against, test, and evaluate than vague ones. Use agents for the dynamic slice of the problem, not everything.

Separate deterministic logic from dynamic reasoning. If a step in your workflow is always the same, implement it deterministically. Reserve agentic reasoning for the cases that genuinely need runtime judgment.
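A sketch of that split, assuming a hypothetical invoice process: deterministic rules settle the routine cases, and only invoices that no rule covers reach `agent_review`, a placeholder for the agentic step. Field names and the threshold are illustrative.

```python
from typing import Callable, Dict, List, Tuple

# Deterministic rules handle the known, stable cases. Field names and the
# threshold are illustrative assumptions.
RULES: List[Tuple[Callable[[Dict], bool], str]] = [
    (lambda inv: not inv["po_number"], "reject_missing_po"),
    (lambda inv: inv["amount"] < 100 and inv["vendor_known"], "auto_approve"),
]

def agent_review(invoice: Dict) -> str:
    # Hypothetical placeholder for the agentic step (model plus tools),
    # reached only when no rule applies.
    return "agent_review"

def process(invoice: Dict) -> str:
    for matches, outcome in RULES:
        if matches(invoice):
            return outcome
    return agent_review(invoice)

print(process({"amount": 50, "vendor_known": True, "po_number": "PO-1"}))  # auto_approve
```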

Build strong tool contracts. Every tool your agent can call should have clear schemas, validated inputs, and structured outputs. Think of it as the AI equivalent of not letting a teammate act on ambiguous instructions.

Add loop breakers and safe failure modes. Timeouts, bounded retries, fallback paths, and human escalation should be standard, not afterthoughts. Teach the system how to fail safely.
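A minimal guard wrapper, assuming synchronous tool calls. The names are hypothetical, and the deadline is only checked between attempts, so it will not interrupt a call that hangs; a per-call timeout would need something like `concurrent.futures` or `asyncio.wait_for`.

```python
import time
from typing import Callable, Tuple

def call_with_guards(
    action: Callable[[], str],
    fallback: Callable[[], str],
    max_attempts: int = 3,
    deadline_s: float = 5.0,
) -> Tuple[str, str]:
    start = time.monotonic()
    for attempt in range(max_attempts):               # bounded retries
        if time.monotonic() - start > deadline_s:     # overall time budget
            break
        try:
            return "ok", action()
        except Exception:
            time.sleep(0.01 * 2 ** attempt)           # brief backoff
    try:
        return "fallback", fallback()                 # safe fallback path
    except Exception:
        return "escalated", "handed to a human operator"  # last resort

def unavailable_tool() -> str:
    raise ConnectionError("tool unreachable")  # simulated outage

print(call_with_guards(unavailable_tool, lambda: "cached answer"))
# ('fallback', 'cached answer')
```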

Instrument everything. Logging, traces, and the ability to replay a workflow decision are essential. You need to understand why the system did what it did—especially when something goes wrong. Observability is a feature, not optional infrastructure.
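A small sketch of call tracing with offline replay, assuming tool arguments and results are JSON-serializable. `Tracer` and `replayer` are hypothetical names, not a specific observability library.

```python
import json
import time
from typing import Any, Callable, Dict, List

class Tracer:
    """Records each tool call so a run can be inspected or replayed later."""

    def __init__(self) -> None:
        self.events: List[Dict[str, Any]] = []

    def call(self, tool: str, fn: Callable[..., Any], **kwargs: Any) -> Any:
        start = time.perf_counter()
        result = fn(**kwargs)
        self.events.append({
            "tool": tool,
            "args": kwargs,
            "result": result,
            "ms": round((time.perf_counter() - start) * 1000, 3),
        })
        return result

    def dump(self) -> str:
        return json.dumps(self.events)  # assumes JSON-serializable args/results

def replayer(log: str) -> Callable[..., Any]:
    """Serves recorded results in order, so a run can be re-traced offline."""
    events = iter(json.loads(log))

    def fake_call(tool: str, **kwargs: Any) -> Any:
        event = next(events)
        if event["tool"] != tool or event["args"] != kwargs:
            raise RuntimeError(f"replay diverged at {tool}")
        return event["result"]

    return fake_call

tracer = Tracer()
total = tracer.call("sum_orders", lambda region: 42 if region == "EU" else 0, region="EU")
replay = replayer(tracer.dump())
print(replay("sum_orders", region="EU"))  # 42
```

Replaying the recorded log returns the same results without touching live systems, and raises if the rerun makes a different call than the original did.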

Keep humans in the loop where stakes are high. For financial decisions, legal actions, customer-facing outputs, or anything with meaningful consequences, human approval checkpoints are worth the added friction.
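A simple approval gate, assuming a policy defined by action type and amount. The high-stakes categories, the threshold, and the `approve` callback (a stand-in for a review ticket, chat prompt, or UI) are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Action:
    kind: str
    amount: float = 0.0

# Which actions need sign-off. The categories and threshold are
# illustrative assumptions; each team would set its own policy.
HIGH_STAKES_KINDS = {"legal_filing", "customer_email", "payment"}
AMOUNT_THRESHOLD = 1000.0

def needs_approval(action: Action) -> bool:
    return action.kind in HIGH_STAKES_KINDS or action.amount > AMOUNT_THRESHOLD

def execute(action: Action, approve: Callable[[Action], bool]) -> str:
    # `approve` stands in for a real review step (ticket, chat prompt, UI).
    if needs_approval(action) and not approve(action):
        return f"blocked {action.kind}: human approval not granted"
    return f"executed {action.kind}"

print(execute(Action("refund", 50), approve=lambda a: False))  # executed refund
```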

A Word on Memory and Context

An agentic workflow is only as useful as the context it can access. There's a meaningful difference between short-term working memory—the context window within a single session—and longer-term memory that persists across runs.

Most systems today handle short-term memory reasonably well. Long-term memory is harder. Simple similarity-based retrieval works for many cases, but tasks that require connected reasoning—tracking decisions across multiple steps, auditing what the system did and why, or retrieving a web of related facts—benefit from more structured approaches. This is an active area of development, and the right architecture depends heavily on the task at hand.
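To make the distinction concrete, here is a sketch with a bounded short-term buffer and a longer-term store that offers both similarity recall and a structured decision log. It assumes bag-of-words cosine similarity as a stand-in for embeddings and keeps everything in process memory; a real system would persist the store.

```python
import math
from collections import Counter, deque
from typing import Dict, List

class WorkingMemory:
    """Short-term memory: keeps only the most recent items, like a context window."""

    def __init__(self, max_items: int = 3) -> None:
        self.items: deque = deque(maxlen=max_items)

    def add(self, text: str) -> None:
        self.items.append(text)  # oldest item drops out when full

def _bag(text: str) -> Counter:
    return Counter(text.lower().split())

def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class LongTermMemory:
    """Longer-term memory (held in-process here; a real one would persist to storage)."""

    def __init__(self) -> None:
        self.notes: List[str] = []
        self.decisions: List[Dict[str, str]] = []

    def remember(self, note: str) -> None:
        self.notes.append(note)

    def recall(self, query: str, k: int = 1) -> List[str]:
        # Similarity-based retrieval; bag-of-words stands in for embeddings.
        q = _bag(query)
        return sorted(self.notes, key=lambda n: _cosine(q, _bag(n)), reverse=True)[:k]

    def log_decision(self, run_id: str, step: str, choice: str, reason: str) -> None:
        # Structured record: supports auditing what was done and why.
        self.decisions.append({"run": run_id, "step": step, "choice": choice, "reason": reason})

    def audit(self, run_id: str) -> List[Dict[str, str]]:
        return [d for d in self.decisions if d["run"] == run_id]

wm = WorkingMemory(max_items=2)
for turn in ["hello", "check order 7", "order shipped"]:
    wm.add(turn)
print(list(wm.items))  # ['check order 7', 'order shipped']

ltm = LongTermMemory()
ltm.remember("customer prefers email contact")
ltm.remember("invoice total was disputed")
print(ltm.recall("how does the customer prefer contact"))  # ['customer prefers email contact']
ltm.log_decision("run-1", "triage", "escalate", "refund above limit")
print(ltm.audit("run-1")[0]["choice"])  # escalate
```

Similarity recall finds related text, while the decision log answers questions like "what did run-1 do and why", which is the kind of connected, auditable lookup plain similarity search handles poorly.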

Try an Ultimate AI Agent Workspace with Prebuilt Workflows

If you're looking for an AI agent that can boost your productivity and help you create, research, and present with higher-quality outputs, then we suggest you try HIX AI.

HIX AI is your ultimate AI agent workspace. By bringing specialized agents together, it effortlessly manages your most demanding, multi-step projects on autopilot. It strategizes the workflow, executes the tasks, and delivers top-tier results.

What you can achieve with HIX AI is limitless—from conducting deep-dive research and compiling reports/slides from the results, to launching viral marketing assets and coding data-driven websites. Just give HIX AI your objective, and watch it perfectly execute the rest.


The Bottom Line

The most important thing to understand about agentic workflows is that they are a systems design problem, not just a model upgrade. The breakthrough isn't "an AI that does everything autonomously." It's a carefully designed system that can plan, act, check itself, and stay within meaningful boundaries—and that's built with the same engineering discipline you'd apply to any other production system.

The teams that get the most out of this technology won't be the ones chasing the most autonomous agents. They'll be the ones who combine AI's flexibility with software engineering's rigor: clear goals, strong contracts, observable behavior, and genuine human oversight where it counts.

That's not a limitation. That's what makes it work.